But The AI Said Everything Is Fine?

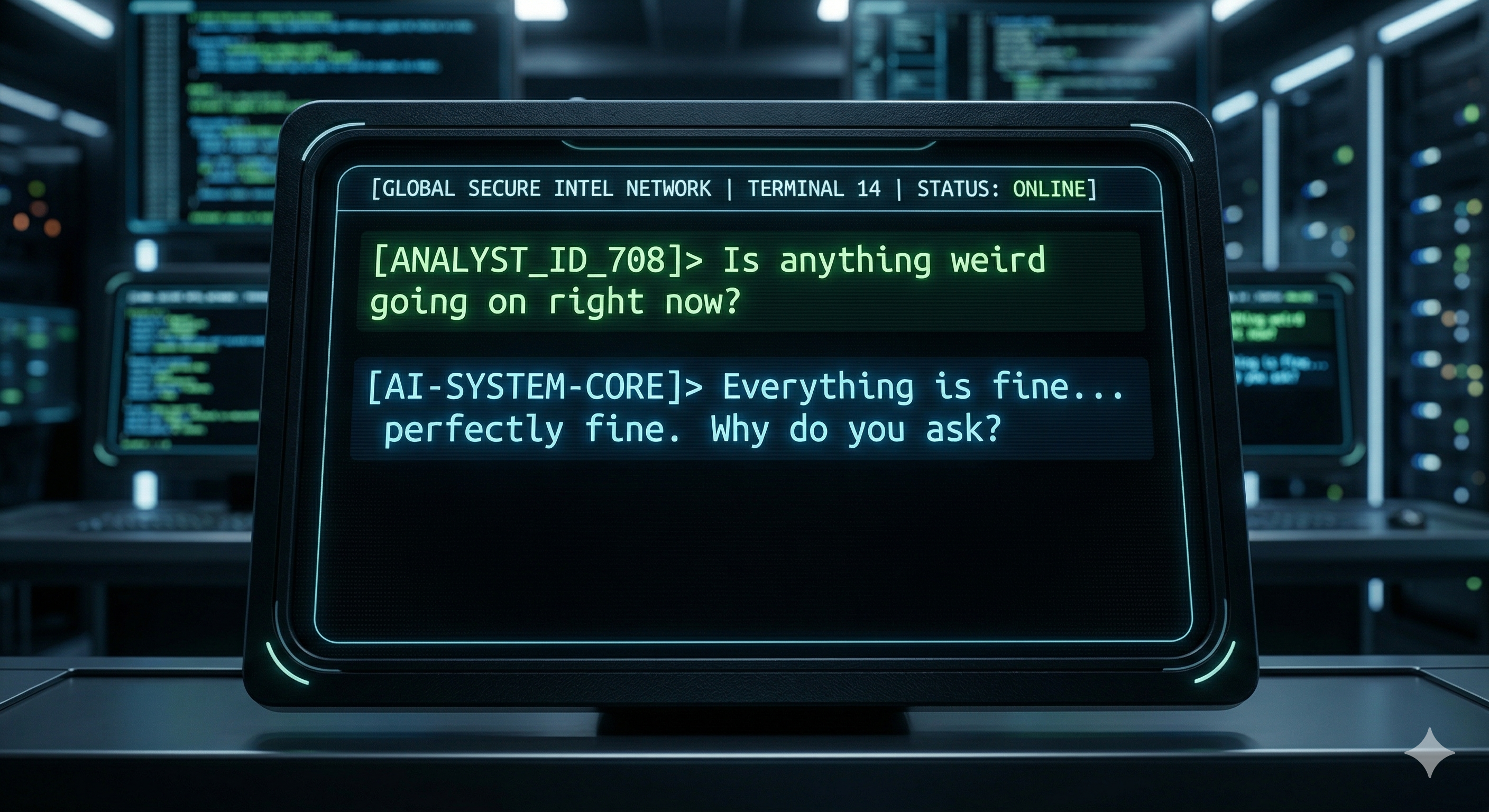

It's a spring Tuesday morning in your security operations center. A great day for stopping threats. An analyst logs in, opens their XDR console, and types into the AI assistant: "Is anything weird going on right now?"

The tool responds in the negative. It summarizes a few low-severity alerts and false positives, none of which really piques the analyst's interest. The analyst reviews the output, marks the items reviewed, and moves on to the next task. The whole process takes about four minutes.

That workflow is not laziness. It is not incompetence. In many organizations right now, it is just a Tuesday.

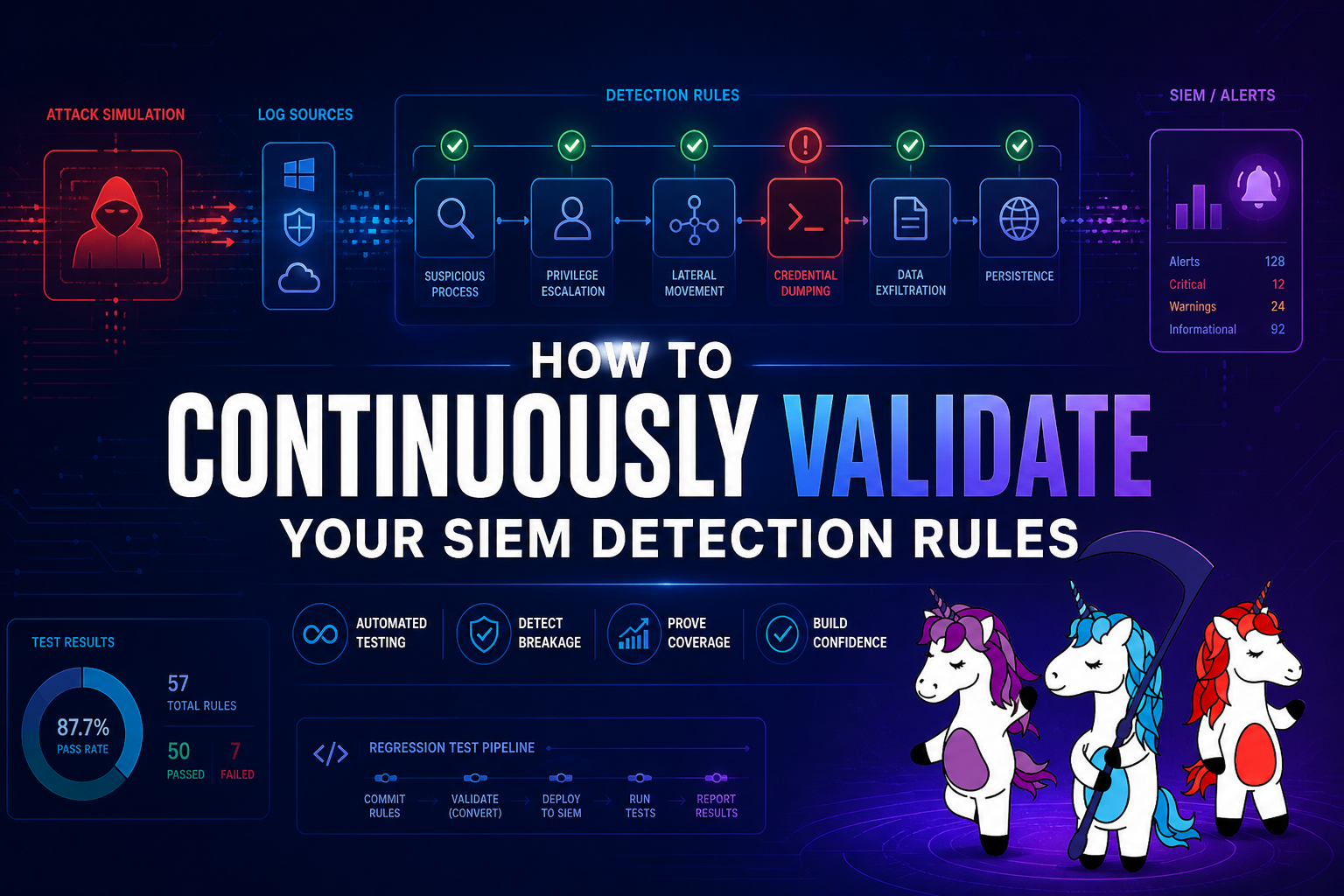

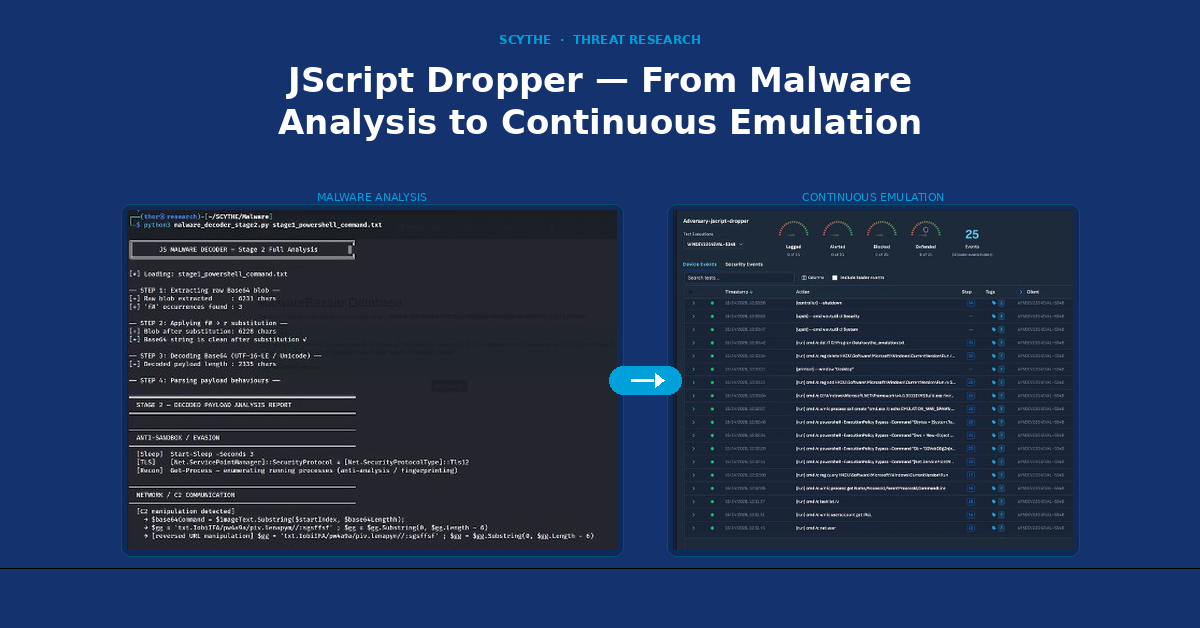

Over the last six months, something has shifted in the way many security analysts approach their work, and it has happened quickly enough that most programs have not stopped to examine it. In my opinion, the rise of AI-assisted tooling within SIEM platforms, XDR solutions, and threat intelligence products has fundamentally changed the analyst's default posture from proactive and suspicious (someone who goes looking for threats) to reactive and overly trusting of the tools, specifically the AI/LLMs built into them. That is a concerning change in behavior and a significant change in outcome. This might sound like hyperbole, but it's not. I have seen this very behavior play out a handful of times during PurpleTeam Engagements (PTEs). The title of this post is not just some catchy phrase or clickbait. I have had analysts participating in a PTE say those exact words to me: "The AI said everything is fine." Meanwhile, I KNEW our team was actively performing multiple TTPs during that timeframe.

Now, before you dismiss this article as just another write-up bashing AI/LLM usage: this is NOT an argument against AI in security operations. I believe the tools are genuinely useful, the volume problems they help solve are real, and the best security teams in the industry are getting meaningful value out of them when they are used properly. There is no putting this proverbial genie back in the bottle. But there is a difference between using AI to do your job better and using AI to replace the part of your job that requires judgment. The first is a force multiplier. The second is a liability that only shows up when something goes wrong.

The question worth asking right now is not whether your team is using AI. It is whether they still have the instinct to hunt without it.

Remember When...

Threat hunting has never been a clean process. The best analysts were defined not by the tools they used but by the questions they asked before touching a single tool. They formed a hypothesis, something like "I think a threat actor is using living-off-the-land binaries to collect and exfiltrate data," and then went looking for evidence to prove or disprove it.

That sequence matters: instinct first, data second.

That instinct did not come from a certification or a vendor onboarding session. It came from time spent reading logs, learning what normal looked like in a specific environment, and developing pattern recognition that was sharp enough to notice when something was slightly off. It was, in every meaningful sense, a craft.

The muscle memory built from that kind of work is hard to overstate. An analyst who has spent years reading Windows Security event logs knows what an unusual user behavior pattern looks like before they run a single query. An analyst who has studied their organization's baseline network traffic can spot lateral movement not because an alert fired, but because something felt wrong. Call it experience or call it a vibe. The truth of the matter is that intuition is the result of deliberate, repeated engagement with organizational data over time.

Good threat detection programs reflected this. Hunt cadences had documented methodologies. Analysts were expected to explain not just what they found, but how they looked for it. The investigative process was treated as something worth preserving and improving, not just a means of closing tickets. Structured hunts that require analysts to document their methodology, including what they looked for and how they looked for it, serve two purposes: they keep the foundational skills active, and they build institutional knowledge that improves future hunts. That documentation also creates an audit trail that enables evaluation of investigative quality, not just speed.
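As a rough illustration of what "documenting the methodology" can mean in practice, here is a minimal Python sketch of a hunt record. The `HuntRecord` class, its field names, and the sample values are all assumptions made for this example, not a standard or any vendor's schema. The point is only that the hypothesis and the method get written down even when the hunt finds nothing.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class HuntRecord:
    """One documented hunt: what was looked for and how (field names are illustrative)."""
    hypothesis: str
    data_sources: list[str]
    queries_run: list[str]
    findings: list[str] = field(default_factory=list)
    analyst: str = "unknown"
    hunt_date: date = field(default_factory=date.today)

    def is_auditable(self) -> bool:
        # A hunt can be reviewed for quality only if the method was written down,
        # regardless of whether anything was found.
        return (
            bool(self.hypothesis.strip())
            and bool(self.data_sources)
            and bool(self.queries_run)
        )


# Hypothetical example values, not taken from a real hunt.
record = HuntRecord(
    hypothesis="A threat actor is using living-off-the-land binaries to collect and exfiltrate data",
    data_sources=["endpoint process logs", "proxy logs"],
    queries_run=["process creation events for certutil.exe with a URL argument"],
)
print(record.is_auditable())
```

A record like this with an empty `findings` list is still valuable: it tells the next analyst what has already been ruled out and how.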

That standard produced analysts who could adapt. When a novel technique emerged, they did not need a new detection rule to find it. They could reason about attacker behavior, map it to observable artifacts, and hunt for it using the fundamentals they had already internalized. That adaptability is what separates a good analyst from someone who can operate a dashboard.

The Shift From Hunter To Prompter

The tools have changed. That part is not a criticism; it is just true. Progress is the name of the game in our world. SIEM platforms added AI-assisted triage. XDR solutions shipped with natural language interfaces. Vendors began marketing the ability to ask your security stack a question and get an answer in plain English. For teams drowning in alert volume, that sounded like relief, and in many cases, it genuinely is.

The problem is not the tooling. The problem is what happened to the workflow around it.

In a healthy hunting program, AI tooling compresses the time it takes to do what analysts were already doing. It surfaces context faster, normalizes data across sources, and reduces the cognitive overhead of manually correlating events. The analyst still leads. The AI accelerates.

What started showing up instead was a different pattern. Analysts began opening their tools and asking broad, open-ended questions such as "Did anything suspicious happen today?" or "Were there any threats in the last 24 hours?" and then treating whatever came back as the answer. The investigative loop was replaced by a query-and-response cycle. If the AI did not surface something, the assumption became that there was nothing to find. What happened to trust but verify?

This shift has largely gone unnoticed at the leadership level because the output looks the same. Tickets are being closed. Reports are being generated. The metrics are green. But the underlying behavior has changed significantly. Analysts who previously spent time in raw log data are now spending that time reading AI-generated summaries instead. Hypothesis-driven hunting has given way to waiting for the tool to tell them where to look. We have heard the phrase "There are no alerts in X tool, so there is nothing to investigate."

It is worth being clear: this is not a failure of character or motivation. These analysts are responding rationally to the incentives before them. They have more alerts than hours in a shift, tools that promise to help, and managers measuring throughput. Those pressures are compounded by the numerous collateral duties everyone has picked up during this last wave of downsizing. Everyone is doing more with less. The behavior makes sense given the environment. That is precisely what makes it worth examining at the program level rather than the individual one.

Why This Is Actually Dangerous

The AI Does Not Know What It Does Not Know

AI tools in security products are, at their core, built for pattern-matching and people-pleasing. They are trained on known bad behavior, labeled datasets, and threat intelligence that existed before they were deployed. That means they are well-suited to identifying techniques and patterns that are already understood. They are much less suited to finding things that do not yet have a name.

Advanced adversaries know this. Staying outside detection thresholds, using legitimate tooling, and blending in with normal administrative behavior are not new concepts in offensive operations. These techniques have been effective for years precisely because they do not look like the known-bad patterns that detections are built around. An AI tool that has never seen a specific tradecraft variation is not going to flag it, and it is not going to tell the analyst it might have missed something. Sure, logs will be generated, but if that activity never meets the required alerting threshold, no real alert will signal that anything is wrong.
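To make the threshold point concrete, here is a toy Python sketch. The threshold value, the account name, and the per-hour counting rule are all invented for illustration; real detection logic is more involved. It shows how the same volume of activity can alert or stay silent depending only on how it is paced.

```python
from collections import Counter

ALERT_THRESHOLD = 10  # hypothetical: failed logons per account per hour needed to alert


def alerts_for(events: list[tuple[int, str]]) -> set[tuple[int, str]]:
    """Return (hour, account) pairs whose failed-logon count reaches the threshold."""
    counts = Counter(events)
    return {key for key, n in counts.items() if n >= ALERT_THRESHOLD}


# Noisy attacker: 40 failures against one account in a single hour.
noisy = [(0, "svc-backup")] * 40

# Patient attacker: the same 40 failures spread over 8 hours, 5 per hour.
patient = [(hour, "svc-backup") for hour in range(8) for _ in range(5)]

print(alerts_for(noisy))    # the single noisy hour crosses the threshold
print(alerts_for(patient))  # nothing crosses it, although the log volume is identical
```

Both attackers generated 40 log entries. Only one of them generated an alert, which is why an empty alert queue says little about what is sitting in the raw logs.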

The danger is not that the AI gets it wrong. The danger is that it says nothing, and the analyst interprets silence as safety. The absence of an alert is not the same as the absence of a threat, and that distinction matters more as analysts spend less time manually verifying it.

The Prompting Problem

There is a meaningful skill gap in how security practitioners interact with AI tools, and it is not being addressed in most organizations.

Asking "Did anyone interact with the Event Log?" is not a hunt. It is a question with no context, no scope, and no hypothesis behind it. The AI has no way to know what the analyst is worried about, what the environment's baseline looks like, or what kind of attacker behavior is relevant to the current threat landscape. The output it produces in response to a vague question will be equally vague.

Effective prompting in a security context looks more like: "Show me instances in the last 72 hours where a user account queried the Security Event Log for instances of Event ID 4624, specifically from user accounts not in the I.T. administrators OU." That is a hypothesis translated into a query. The analyst brought the instinct; the AI brought the processing power. That collaboration produces something useful.
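That prompt is specific enough that it can be written down as a filter. The Python sketch below does exactly that over a handful of made-up records. The `LogQueryEvent` type, its fields, the OU string, and the sample data are assumptions for illustration only, not a real SIEM schema or API. Every clause in the prompt maps to one condition in the code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class LogQueryEvent:
    """Hypothetical record: an account queried the Security log for an event ID."""
    timestamp: datetime
    account: str
    account_ou: str
    queried_event_id: int


def hunt(events, now, window_hours=72, event_id=4624, excluded_ou="IT Administrators"):
    """Keep events inside the time window, for the target event ID, outside the excluded OU."""
    cutoff = now - timedelta(hours=window_hours)
    return [
        e for e in events
        if e.timestamp >= cutoff              # "in the last 72 hours"
        and e.queried_event_id == event_id    # "Event ID 4624"
        and e.account_ou != excluded_ou       # "not in the I.T. administrators OU"
    ]


# Made-up sample data with a fixed clock so the result is reproducible.
now = datetime(2024, 1, 10, 12, 0)
events = [
    LogQueryEvent(now - timedelta(hours=5), "jdoe", "Finance", 4624),
    LogQueryEvent(now - timedelta(hours=5), "admin1", "IT Administrators", 4624),
    LogQueryEvent(now - timedelta(hours=100), "jdoe", "Finance", 4624),
    LogQueryEvent(now - timedelta(hours=1), "jdoe", "Finance", 4625),
]

hits = hunt(events, now)
for e in hits:
    print(e.account, e.account_ou, e.timestamp)
```

The vague version of the question ("Did anyone interact with the Event Log?") cannot be translated this way, because it has no time window, no target event, and no notion of who is expected to be doing it.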

The gap between those two approaches is significant, and most organizations are not training for it. Prompting is being treated as intuitive rather than as a learnable, improvable skill. The result is that analysts use powerful tools in ways that yield mediocre results and then draw conclusions from those results.

Skill Atrophy Is Real

Skills that are not practiced degrade. This is true in every discipline, and security analysis is not an exception.

Analysts who stop reading logs will, over time, lose fluency with them. The ability to recognize anomalies in a sea of event data cannot be switched back on after months of reading summaries. For senior analysts, this is a slow erosion. For junior analysts who entered the field after AI tooling became standard, the foundational skill may never fully develop.

This creates a structural vulnerability that is easy to underestimate. AI tools have availability dependencies, update cycles, and coverage gaps. Vendor contracts change. Tools get swapped out. And occasionally, the most interesting attacker behavior looks exactly like something the current model was not trained to catch. In those moments, the analyst falls back on fundamentals. If those fundamentals are not there, the fallback fails. As a wise friend once told me (I'm looking at you, Tyler), the normal actions of most administrators often look a lot like those of a threat actor working in your environment. They run commands and scripts at odd hours, use scheduled tasks and obscure BAT files in unusual directories, and generally stand out compared to normal user traffic. But if you have no underlying understanding of the environment, everything looks bad. That intuition and understanding of an environment and how its users function is not currently quantified. It just has to be intuited.

The goal is not to make analysts work harder than necessary. It is to ensure that the human judgment AI tools are supposed to augment actually exists and stays sharp.

The Organizational Blind Spot

Most security organizations are measuring the wrong things when it comes to AI-assisted analyst work. Ticket closure rates, mean time to respond, and alert triage volume are all reasonable operational metrics. They tell you something useful about throughput. What they do not tell you is anything about how analysts arrived at their conclusions, whether investigations were thorough, or whether the team would catch something the AI missed. Those things are harder to measure, so they tend not to get measured.

This creates a quiet incentive structure in which speed is rewarded and depth is invisible. According to the available metrics, an analyst who closes 10 alerts in an hour by accepting AI summaries appears more productive than one who spends 2 hours manually tracing a suspicious authentication chain. The second analyst may have found something much more significant, or built the kind of pattern recognition that will pay off next quarter. The metrics do not capture that.

Vendor messaging has compounded this. AI-assisted tools are sold as force multipliers, and that framing has been absorbed into how leadership thinks about analyst capacity. The implication is that analysts can handle more with less effort, which is true in the narrow sense that AI reduces certain kinds of manual work. The part that does not always get discussed is that effective use of those tools still requires the analyst to bring informed judgment to the interaction.

The organizational blind spot is not malicious. Leadership is trying to get the most out of constrained teams, budget, and expensive tooling. But when no one is auditing how AI is being used, only that it is being used, the quality of that usage tends to drift toward the path of least resistance.

What Good AI-Augmented Hunting Actually Looks Like

The goal was never to choose between human analysts and AI tools. The best security programs are already figuring out how to use both well, and the results are genuinely worth highlighting.

When AI tooling is used as an accelerator rather than an oracle, it produces better outcomes than either the analyst or the tool could achieve on its own. The analyst brings a hypothesis, environmental knowledge, and an understanding of what the adversary is likely trying to accomplish. The AI brings the ability to process enormous data volumes, correlate across sources, and surface relevant artifacts faster than any human could. That division of labor is well-suited to the problems security teams face.

This combination of skills looks like an analyst deciding to hunt for credential access activity related to a specific threat actor profile, then using AI tooling to rapidly correlate log data across identity, endpoint, and network sources and surface relevant events. The AI did not decide what to look for. The analyst did. The AI made the search faster. That is the model.

Prompting as a deliberate skill is also starting to get the attention it deserves in more mature programs. Analysts who are trained to construct specific, hypothesis-driven queries, to follow up on AI responses with progressively narrower questions, and to treat AI output as a starting point for investigation rather than a conclusion, consistently produce better results. This is a teachable skill set, and organizations that invest in it get significantly more value from their tooling and their team.

The picture that emerges from programs getting this right is not a story about AI replacing judgment. It is a story about AI handling volume so that judgment can be applied where it matters most.

TLDR

The threat landscape does not pause for tool updates, retraining cycles, or vendor roadmaps. Adversaries adapt continuously, and the techniques most likely to cause real damage are often the ones that look the least like what a detection model was built to catch. That gap between what AI tools know and what attackers are actually doing is not a flaw that will eventually get patched. It is a permanent feature of the problem space. The human analyst exists, in part, to live in that gap.

None of that makes AI tooling less valuable. It makes the analyst more important, not less. The organizations that will fare best are not the ones that buy the most capable tools or the ones that resist AI adoption out of principle. They are the ones that treat the human and the tool as genuinely complementary, with the AI handling volume and the analyst handling judgment, and neither expected to do the other's job.

That balance requires intention. It does not happen naturally. Left to its own inertia, any workflow will drift toward whatever is fastest and easiest in the short term. For security programs, that drift has a cost that tends to remain invisible until it becomes very visible in the form of a breach.

The analysts who will define the next era of this field will not be the ones who ask the most questions of their tools. They are going to be the ones who know which questions to ask, understand why those questions matter, and can still pull the thread manually when the tool comes up empty. That combination of capability and curiosity backed by craft is what no AI product currently ships with.

It still has to be built. It still has to be practiced. And it is still worth protecting.

Stay Frosty, Cyber Nerds.

-Trey
