Why Legacy BAS Vendors Fall Short in AEV


Marc Brown

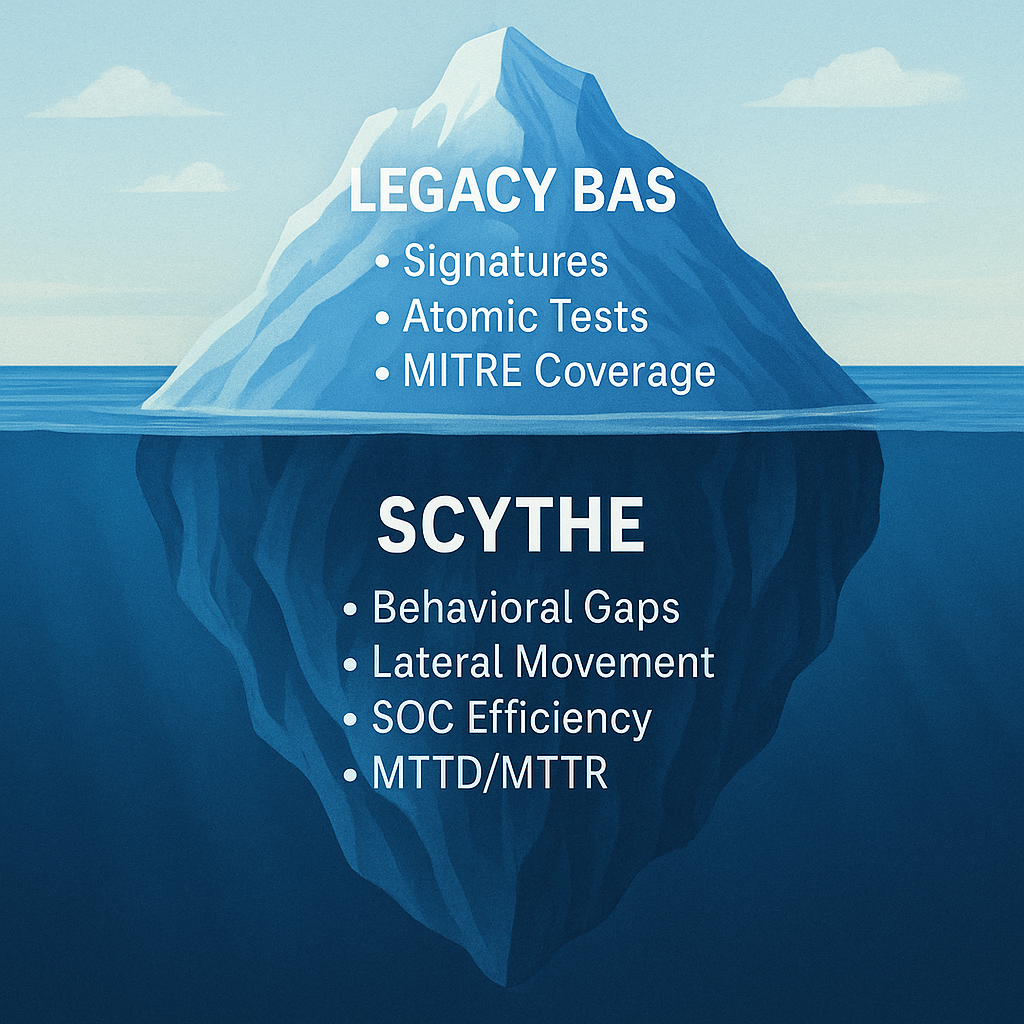

Legacy BAS vendors are rebranding as AEV platforms. But slapping a new label on atomic test scripts doesn't make them adversary emulation. The architecture is fundamentally different — and the metrics prove it.

SCYTHE · April 2026 · 14 min read

As Continuous Threat Exposure Management (CTEM) becomes the operating model for mature security programs, Adversarial Exposure Validation (AEV) has emerged as its critical validation layer — the mechanism that proves defenses work against real-world adversary behavior, not just in theory.

Predictably, every legacy Breach and Attack Simulation (BAS) vendor has noticed. The market is flooded with BAS platforms rebranding as "AEV solutions": same architecture, same atomic tests, same script-based execution, new marketing page. The pitch sounds right. The product underneath hasn't changed.

This post breaks down exactly why legacy BAS architectures can't deliver true AEV, where the gaps show up in the metrics that matter, and what a purpose-built AEV platform actually does differently.

The Bottom Line

AEV requires realistic, multi-stage attack campaigns that test detection and response across people, process, and technology. Legacy BAS tests individual techniques in isolation using scripted, defanged atomic actions. The difference isn't incremental — it's architectural. And it shows up in every metric that matters.

The Architectural Gap: Why BAS Was Never Built for This

Legacy BAS platforms were designed to answer a simple question: does this control detect this technique? They execute a predefined atomic action — a single MITRE ATT&CK technique, using a defanged payload or known signature — and check whether the corresponding alert fires. Run a thousand of these in sequence and you get a coverage heatmap. That was a useful starting point. It's not AEV.

Real adversaries don't execute techniques in isolation. A ransomware operator doesn't run T1059.001 (PowerShell), check if an alert fired, then stop. They chain credential access into privilege escalation into lateral movement into data staging into encryption — adapting at each step based on what the environment allows, what defenses they encounter, and what access they've gained. The attack has context, sequence, and behavioral logic. Legacy BAS has none of these.
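To make the difference concrete, here is a toy Python model of the two approaches. It is not SCYTHE's implementation, and everything in it is an illustrative assumption: a set of blocked technique IDs stands in for your defenses, each `Stage` lists fallback techniques for one tactic, and `run_atomic` / `run_campaign` are hypothetical names. The atomic run tests each technique alone. The campaign run only reaches a stage if the previous one succeeded, and swaps in an alternative when a technique is blocked.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Stage:
    """One campaign step: a tactic and alternative ATT&CK techniques for it."""
    tactic: str
    options: list[str]  # tried in order; later entries are fallbacks


def run_atomic(techniques: list[str], blocked: set[str]) -> dict[str, bool]:
    """Atomic view: each technique is tested on its own; True means it was blocked."""
    return {t: t in blocked for t in techniques}


def run_campaign(stages: list[Stage], blocked: set[str]) -> list[tuple[str, str | None]]:
    """Campaign view: stages run in order, a stage is only reached if the previous
    one succeeded, and a blocked technique is swapped for the next alternative."""
    path: list[tuple[str, str | None]] = []
    for stage in stages:
        chosen = next((t for t in stage.options if t not in blocked), None)
        path.append((stage.tactic, chosen))
        if chosen is None:
            break  # every option was blocked: the chain is contained at this stage
    return path


stages = [
    Stage("execution", ["T1059.001", "T1059.003"]),         # PowerShell, then Windows Command Shell
    Stage("credential-access", ["T1003.001", "T1555"]),     # LSASS memory, then password stores
    Stage("lateral-movement", ["T1021.002", "T1021.001"]),  # SMB admin shares, then RDP
    Stage("impact", ["T1486"]),                             # data encrypted for impact
]
blocked = {"T1059.001", "T1003.001"}  # hypothetical: the EDR stops these two techniques

print(run_atomic([s.options[0] for s in stages], blocked))
print(run_campaign(stages, blocked))
```

With two techniques blocked, the atomic view reports half of the tested techniques stopped, while the campaign falls back to other techniques at both blocked steps and still reaches impact.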

Legacy BAS: Atomic & Static

Executes individual techniques using scripted, defanged payloads tied to known signatures and IOCs. Each test runs independently — no chaining, no environmental awareness, no behavioral adaptation. Tests technology only. Results are pass/fail against predetermined rules.

AEV: Campaign-Based & Behavioral

Emulates realistic multi-stage adversary campaigns that chain techniques across the full kill chain — adapting to the environment, generating true-positive telemetry, and testing detection AND response across people, process, and technology. Results are measured in MTTD, MTTR, and validated detection posture.

Seven Metrics Where Legacy BAS Falls Short

AEV demands metrics that measure organizational readiness — not just tool configuration. Here's where the gap between legacy BAS and true AEV shows up most clearly.

Detection Coverage & Fidelity

Legacy BAS tools offer broad coverage by testing individual ATT&CK techniques, but the fidelity is shallow. Executing a defanged atomic test doesn't generate the same telemetry as a real adversary chaining techniques across your environment. The detections that fire against isolated scripts may not fire against a realistic campaign — because real attacks look different in context than they do in isolation.

AEV platforms deliver high-fidelity detection validation by executing realistic attack chains that generate true-positive telemetry — the same signals your SOC would see during an actual intrusion. This means coverage metrics reflect operational reality, not lab conditions.

A note on "MITRE ATT&CK coverage" as a metric

Chasing technique coverage as a checklist is misleading and wasteful. Teams burn hours treating it as a scorecard — testing techniques irrelevant to their actual threat landscape and creating a false sense of security that looks comprehensive on paper while masking operational gaps. Coverage density matters only when it's measured against the specific adversaries targeting your industry, using realistic campaign execution, not atomic tests.
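One way to express this is to score coverage per adversary rather than against the whole ATT&CK matrix. The sketch below is a minimal example under stated assumptions: the adversary names and their technique sets are hypothetical placeholders, not threat intelligence, and `validated` is assumed to hold techniques whose detections were confirmed through campaign execution.

```python
from __future__ import annotations


def threat_relevant_coverage(
    validated: set[str], threat_profile: dict[str, set[str]]
) -> dict[str, float]:
    """Share of each adversary's techniques that have a validated detection."""
    return {
        actor: len(techniques & validated) / len(techniques)
        for actor, techniques in threat_profile.items()
        if techniques  # skip empty profiles to avoid dividing by zero
    }


# Hypothetical data: six techniques with validated detections...
validated = {"T1059.001", "T1003.001", "T1021.001", "T1047", "T1053.005", "T1218.011"}

# ...measured against two hypothetical adversary profiles for one industry.
threat_profile = {
    "ransomware_crew_x": {"T1566.001", "T1059.003", "T1555", "T1021.002", "T1486"},
    "espionage_group_y": {"T1566.002", "T1059.001", "T1003.001", "T1041"},
}

print(threat_relevant_coverage(validated, threat_profile))
# {'ransomware_crew_x': 0.0, 'espionage_group_y': 0.5}
```

Six validated techniques look like progress on a heatmap, yet none of them belong to the hypothetical ransomware crew's profile.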

Threat Preparedness

Measuring whether your organization can actually stop a phishing campaign, a ransomware operator, or an insider threat requires testing the full attack lifecycle — not individual techniques in a vacuum. Legacy BAS can't replicate how a phishing email leads to credential theft, which leads to privilege escalation, which leads to lateral movement, which leads to data encryption. It tests each link independently, never the chain.

The critical insight: a threat actor steals credentials to use them, not to celebrate. Their post-credential activity will look different on your hosts and networks than the initial theft. If you're not testing at that level — what happens after each step, across systems, against your actual operations — you're not measuring preparedness. You're measuring configuration.

Security Control Effectiveness

Legacy BAS delivers pass/fail outcomes based on signature detection or rule matches. A technique either triggered an alert or it didn't. That binary view misses the nuance that matters: Was the alert actionable? Did it generate enough context for triage? Did the automated response trigger? Was the SOAR playbook executed? Were the right people notified?

AEV tests how controls behave in context — whether alerts generate, detections log, and automated responses trigger across the full response chain. This uncovers misconfigurations, tuning gaps, and alerting blind spots that binary testing never surfaces. It also provides the evidence to demonstrate ROI on existing investments and identify which tools are underperforming.
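The gap between a binary verdict and a response-chain view can be sketched in a few lines. The stage names are hypothetical labels mirroring the questions in the previous paragraph, and `observed` stands in for whatever evidence a platform collects during a campaign; this is an illustration, not any vendor's data model.

```python
from __future__ import annotations

# Hypothetical stage names, one per question a binary pass/fail skips.
RESPONSE_CHAIN = [
    "alert_fired",
    "actionable_context",
    "automated_response",
    "soar_playbook_executed",
    "right_people_notified",
]


def binary_result(observed: dict[str, bool]) -> bool:
    """BAS-style verdict: pass if the alert fired, nothing else considered."""
    return observed.get("alert_fired", False)


def first_break(observed: dict[str, bool]) -> str | None:
    """Return the first response-chain stage that did not happen, or None if all did."""
    for stage in RESPONSE_CHAIN:
        if not observed.get(stage, False):
            return stage
    return None


observed = {
    "alert_fired": True,
    "actionable_context": True,
    "automated_response": False,  # e.g. the host isolation action never triggered
}
print(binary_result(observed))  # True
print(first_break(observed))    # automated_response
```

The binary check reports a pass; the chain view shows where the response actually stopped.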

Compliance Validation

Legacy BAS provides checkbox-style assessments, confirming that controls exist and basic techniques trigger expected alerts. That was adequate for older compliance frameworks. It's not adequate for NIST SP 800-53, TSA SD-02C, NERC CIP, DORA, or TIBER-EU, all of which now demand evidence of operational effectiveness, not just control existence. AEV produces the evidence that evolving regulatory standards require: validated detection, measured response, and demonstrated continuous improvement.

MTTD & MTTR

This is where the gap between BAS and AEV is most visible. Legacy BAS simulates isolated actions that don't reflect the full lifecycle of a real attack — so there's no meaningful way to measure how long it takes your team to detect a campaign, investigate the scope, and execute containment. MTTD and MTTR derived from atomic tests are disconnected from operational reality.

AEV enables precise measurement by executing end-to-end campaigns in production environments. MTTD tells you whether your tools caught it, and how quickly. MTTR tells you whether your team caught it, and how fast they responded. A detection that fires but sits unresolved for 72 hours surfaces a resourcing or readiness gap that better tooling alone won't fix.
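As a worked example, the sketch below computes MTTD and MTTR from campaign timestamps. It assumes MTTD runs from campaign start to the first validated detection and MTTR from detection to completed containment; some teams measure MTTR to full resolution instead. The `CampaignRun` record and its field names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class CampaignRun:
    started: datetime
    detected: datetime | None   # first validated detection; None if never detected
    contained: datetime | None  # containment completed; None if never contained


def _mean_hours(seconds: list[float]) -> float | None:
    return mean(seconds) / 3600 if seconds else None


def mttd_mttr(runs: list[CampaignRun]) -> dict[str, float | int | None]:
    detect = [
        (r.detected - r.started).total_seconds()
        for r in runs
        if r.detected is not None
    ]
    respond = [
        (r.contained - r.detected).total_seconds()
        for r in runs
        if r.detected is not None and r.contained is not None
    ]
    return {
        "mttd_hours": _mean_hours(detect),
        "mttr_hours": _mean_hours(respond),
        # Misses are reported separately; averaging only detected runs would hide them.
        "undetected": sum(1 for r in runs if r.detected is None),
        "detected_not_contained": sum(
            1 for r in runs if r.detected is not None and r.contained is None
        ),
    }


t0 = datetime(2026, 4, 1, 9, 0)
runs = [
    CampaignRun(t0, t0 + timedelta(minutes=30), t0 + timedelta(hours=2, minutes=30)),
    CampaignRun(t0, t0 + timedelta(minutes=90), t0 + timedelta(hours=73, minutes=30)),
    CampaignRun(t0, None, None),  # the campaign ran end to end unnoticed
]
print(mttd_mttr(runs))
# {'mttd_hours': 1.0, 'mttr_hours': 37.0, 'undetected': 1, 'detected_not_contained': 0}
```

Here MTTD averages one hour, while MTTR averages 37 hours, pulled up by the run whose detection sat for 72 hours before containment. Undetected runs are counted separately rather than silently dropped, which would otherwise make MTTD look better than it is.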

SOC Efficiency

Legacy BAS checks whether an alert fired. It can't tell you whether the SOC triaged it correctly, escalated it appropriately, or acted on it at all. It measures tool output in isolation, ignoring the human and process dimensions that determine whether a detection becomes a contained incident or a missed breach.

AEV campaigns traverse multiple layers of the environment — endpoint, network, cloud, identity — testing whether controls detect activity at each stage, how alerts are prioritized, and how quickly the SOC responds. This measures not just tool efficacy but human and process performance under real pressure. It reveals bottlenecks, reduces alert fatigue, and identifies where resources should be allocated.

Vulnerability Impact Scoring

Legacy BAS assesses vulnerabilities based on static CVSS scores — theoretical severity that doesn't reflect real-world exploitability in your specific environment. A Critical-rated vulnerability that your EDR blocks at execution is not the same risk as a Medium finding that bypasses every control. AEV evaluates how vulnerabilities perform in adversarial scenarios — whether they enable privilege escalation, persistence, or data access — providing evidence-driven impact scoring that prioritizes remediation based on actual risk rather than theoretical severity.
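A minimal sketch of evidence-first prioritization, assuming each finding carries two observations from emulation: whether controls blocked it and which follow-on outcomes it enabled. The `Finding` fields, the placeholder IDs, and the ordering rule are hypothetical illustrations of the idea, not SCYTHE's scoring model; CVSS is kept only as a tie-breaker.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Finding:
    finding_id: str                # hypothetical identifier, not a real CVE
    cvss: float                    # static base score
    blocked_by_controls: bool      # observed during adversary emulation
    enables: set[str] = field(default_factory=set)  # e.g. "privilege-escalation"


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Rank by emulation evidence first, CVSS only as a tie-breaker."""
    return sorted(
        findings,
        key=lambda f: (
            f.blocked_by_controls,  # False sorts first: findings that got through
            -len(f.enables),        # then findings that enabled more follow-on access
            -f.cvss,                # then theoretical severity
        ),
    )


critical_blocked = Finding("VULN-A", 9.8, blocked_by_controls=True)  # EDR stopped it
medium_bypass = Finding(
    "VULN-B", 5.4, blocked_by_controls=False,
    enables={"privilege-escalation", "data-access"},
)
print([f.finding_id for f in prioritize([critical_blocked, medium_bypass])])
# ['VULN-B', 'VULN-A']
```

The 9.8 finding that the EDR blocked drops below the 5.4 finding that got through and enabled privilege escalation and data access.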

The Full Comparison: Legacy BAS vs. AEV

| Capability | Legacy BAS | AEV (SCYTHE) |
| --- | --- | --- |
| Test methodology | Atomic, script-based, isolated techniques | Multi-stage campaigns with behavioral logic |
| Environmental awareness | None: same script regardless of environment | Adapts to target environment and defenses |
| Payload realism | Defanged malware, known signatures, IOCs | Behavioral emulation generating true-positive telemetry |
| Kill chain coverage | Individual techniques tested independently | Full chain: access through exfiltration |
| What it validates | Technology (did the alert fire?) | People + process + technology (did anyone act?) |
| Detection fidelity | Low: scripted actions don't match real telemetry | High: campaigns generate production-grade signals |
| MTTD / MTTR measurement | Limited: no campaign lifecycle to measure | Precise: end-to-end campaign timing |
| SOC / human layer testing | Not measured | Triage, escalation, response workflows validated |
| Team collaboration | Automated, no team interaction | Red + blue + purple team workflows |
| Compliance evidence | Checkbox: controls exist | Operational: controls work under adversary pressure |
| CTEM Phase 4 readiness | Partial: technique-level only | Full operationalization |
| IT + OT/ICS coverage | IT only in most cases | IT, cloud, and OT/ICS from a single platform |

The AI Factor: Why the Gap Is Widening

AI is accelerating the threat landscape in ways that make legacy BAS architectures increasingly irrelevant. Adversaries are using AI to generate polymorphic payloads that evade signature-based detection, automate multi-stage attack chains that adapt in real time, and craft social engineering at a scale that would have been impossible two years ago.

Legacy BAS, built on static scripts and known signatures, can't test against threats that evolve faster than the vendor can update the library. AEV platforms with AI-assisted campaign generation close that gap — enabling teams to build and deploy new emulation campaigns within hours of a CISA advisory or threat intelligence update, using natural language descriptions to generate realistic multi-stage attack paths tailored to their environment and threat landscape.

Don't Buy a Rebrand. Buy the Architecture.

As CTEM becomes the operating model for mature security programs, AEV is the validation mechanism that makes it real. Legacy BAS vendors adding "AEV" to their marketing page doesn't change the underlying architecture — and the architecture determines everything: what you can test, what you can measure, and what you can prove.

When evaluating platforms, the questions that matter are simple: Does it emulate multi-stage campaigns or execute atomic tests? Does it validate the response chain or just check if an alert fired? Does it measure MTTD and MTTR or only technique coverage? Does it test across IT, cloud, and OT or just endpoints? The answers will tell you whether you're buying AEV or buying a BAS rebrand with a new logo.

See AEV Done Right

SCYTHE: Purpose-Built for Adversarial Exposure Validation

SCYTHE's AEV platform delivers multi-stage adversary campaign emulation, full response chain validation, and continuous measurement of MTTD, MTTR, and detection coverage — across IT, cloud, and OT/ICS environments. See the full comparison on our AEV vs. BAS vs. Pen Testing page.
