
Cybersecurity Testing Evolves: Pen Testing, BAS, BAS+, & AEV


Marc Brown

Pen testing asks "can someone get in?" BAS asks "do my controls detect this technique?" AEV asks the question that actually matters: "when a real adversary targets us, does our entire defensive operation — people, process, and technology — work?"

SCYTHE · Updated April 2026 · 14 min read

Every security team makes assumptions. That the EDR catches lateral movement. That the SIEM fires when credentials are dumped. That the SOC responds when an alert hits the queue. That the IR playbook actually works under pressure.

The question isn't whether those controls are configured — it's whether they actually work against the adversaries targeting your organization right now. Penetration testing, Breach and Attack Simulation (BAS), and Adversarial Exposure Validation (AEV) each attempt to answer that question. They do it in very different ways, with very different outcomes.

This post traces the evolution from pen testing through BAS to AEV — not as a replacement narrative where each generation kills the last, but as a maturity progression where each approach serves a distinct purpose. Understanding where each excels and where each falls short is essential for building a security validation program that produces evidence, not assumptions.

The Core Question

The evolution of cybersecurity testing tracks a single progression: from "do our controls exist?" to "do they actually work — and how do we prove it continuously?" Each generation gets closer to answering that question for real.

Penetration Testing: The Foundation

Penetration testing is where most security programs begin their validation journey. A skilled professional — or team — spends one to four weeks attempting to breach your environment using real attacker techniques and human creativity. They find vulnerabilities, chain them into attack paths, and deliver a report documenting what they exploited, how far they got, and what should be fixed.

Pentesting's strength is human judgment. A good pentester finds complex, chained attack paths that automated tools miss. They think creatively, adapt to defenses, and uncover vulnerabilities that scanners can't reach. For compliance, pentesting remains a standard deliverable: PCI DSS mandates it explicitly, and SOC 2 and ISO 27001 auditors commonly expect it as evidence. That expectation exists for good reason: a pentest provides deep, point-in-time assurance that an expert tried to break in and documented what they found.

Where pentesting falls short

Results are valid for the day the test ended — not six months later. Pentests can't tell you whether your detections fired, only if someone got in. Cost and logistics prevent continuous coverage. And critically, pentesting concentrates effort on the left side of the kill chain — reconnaissance and initial access — while your security investments live on the right side: SIEM rules, EDR policies, detection playbooks, SOC workflows, and your team. A pentest that proves someone can get in doesn't tell you whether anyone noticed.

Breach & Attack Simulation (BAS): Automation Arrives

BAS emerged to solve pentesting's biggest limitation: cadence. If you can only test once or twice a year, you're blind to every change that happens between engagements — new systems, configuration drift, rule modifications, staff turnover. BAS automates the execution of known attack techniques, typically mapped to MITRE ATT&CK, and runs them continuously against your controls to check whether they detect or block each technique.

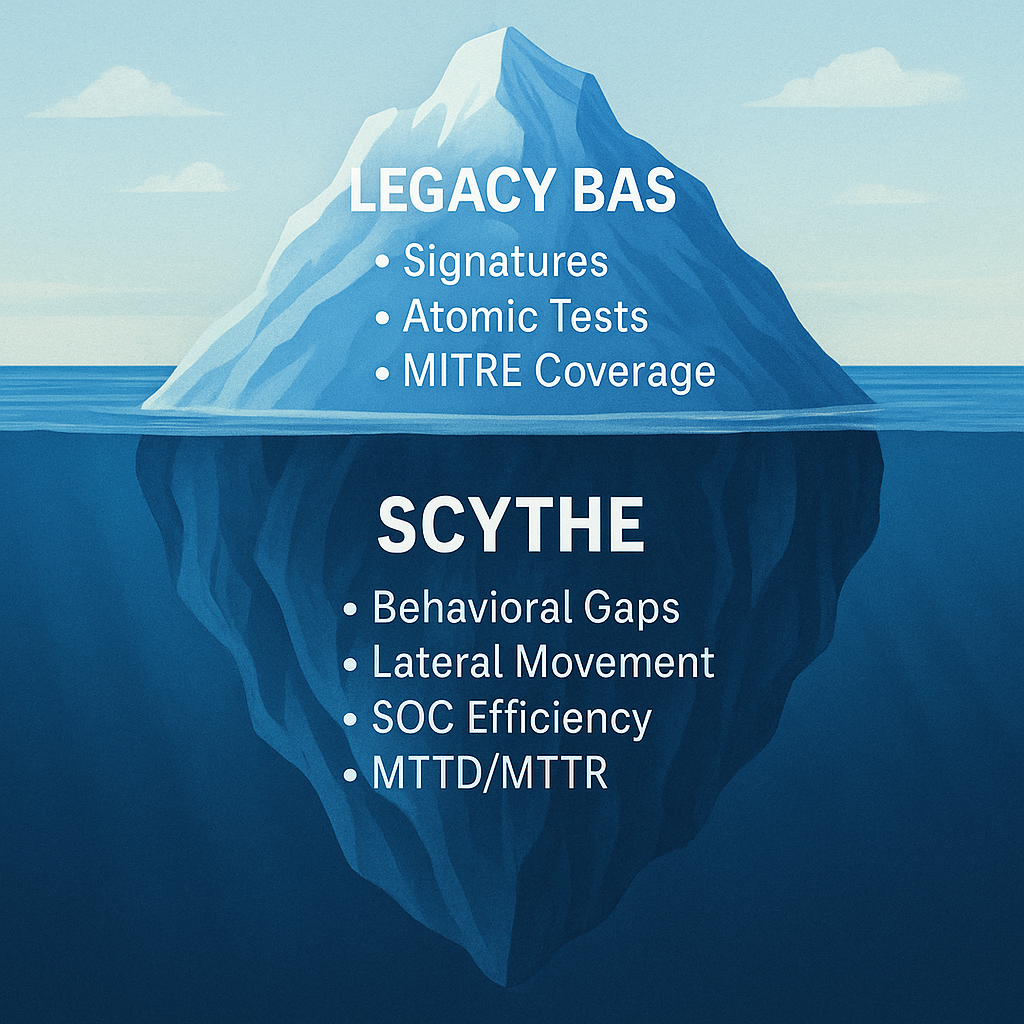

This was a meaningful step forward. For the first time, organizations could measure their ATT&CK coverage density across endpoints, network, and email at scale — not by reading vendor datasheets but by testing techniques against their actual environment. BAS tools are useful as a baseline measurement layer: they tell you which techniques your controls detect in isolation.

Where BAS falls short

BAS tests techniques in isolation using static, script-based logic — not realistic multi-stage campaigns that reflect how adversaries actually behave. A real adversary doesn't execute T1059.001 (PowerShell) and stop. They chain it with credential access, privilege escalation, lateral movement, and data staging in a sequence designed to evade detection. BAS can tell you an alert fired, but it can't tell you whether anyone responded — it measures the technology stack and ignores the human layer entirely. When your BAS dashboard says "78% ATT&CK coverage," the question you should ask is: does the SOC actually act when those alerts fire?
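To make the gap between a coverage percentage and a campaign concrete, here is a minimal Python sketch. It assumes a hypothetical result format (one detected/not-detected flag per ATT&CK technique) and a hand-picked four-step chain; neither comes from any specific BAS product. It shows how a headline coverage number can look healthy while one link in the chain goes unseen. It deliberately says nothing about human response, which technique-level results cannot capture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TechniqueResult:
    technique_id: str  # ATT&CK technique ID, e.g. "T1059.001"
    detected: bool     # did a control alert on or block the technique?


def technique_coverage(results):
    """Fraction of individually tested techniques that were detected."""
    if not results:
        return 0.0
    return sum(r.detected for r in results) / len(results)


def first_undetected_step(campaign, results):
    """Return the first technique in an ordered campaign that went undetected, or None."""
    detected = {r.technique_id: r.detected for r in results}
    for technique_id in campaign:
        if not detected.get(technique_id, False):
            return technique_id
    return None


# Hypothetical results from isolated technique tests (illustrative data only).
results = [
    TechniqueResult("T1059.001", True),   # PowerShell
    TechniqueResult("T1003.001", True),   # LSASS memory dumping
    TechniqueResult("T1021.002", False),  # SMB / Windows admin shares
    TechniqueResult("T1074.001", True),   # Local data staging
]

# The same techniques, ordered the way an adversary might chain them.
campaign = ["T1059.001", "T1003.001", "T1021.002", "T1074.001"]

print(f"technique coverage: {technique_coverage(results):.0%}")
print("first undetected step in chain:", first_undetected_step(campaign, results))
```

In this made-up data set, three of four techniques are detected, yet the lateral movement step in the middle of the chain is the one that slips through.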

Adversarial Exposure Validation (AEV): The Full Picture

AEV combines the realism of penetration testing with the automation of BAS — plus the measurement infrastructure to track detection and response improvement over time. It's what happens when the industry stops asking "do our controls exist?" and starts asking "do they actually work against a real adversary campaign — and can we prove it?"

Where BAS tests individual techniques, AEV emulates real, multi-stage adversary campaigns — evasive, chained, and contextually aware. Where pentesting provides a point-in-time report, AEV runs continuously after every tool change, config update, or new system in scope. And where both BAS and pentesting stop at the technology layer, AEV validates the entire response chain: detection fires → SIEM alert generates → SOC workflow triggers → response executes.
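The response chain above can be sketched as a simple per-step check. This is an illustration under stated assumptions, not how any AEV platform implements it: the `StepObservation` record and its field names are hypothetical, and in practice each flag would be populated from EDR, SIEM, and ticketing data.

```python
from dataclasses import dataclass
from typing import Optional

# Stage order follows the chain described above; the names are hypothetical.
CHAIN = (
    "detection_fired",
    "siem_alert_generated",
    "soc_workflow_triggered",
    "response_executed",
)


@dataclass
class StepObservation:
    technique_id: str
    detection_fired: bool = False
    siem_alert_generated: bool = False
    soc_workflow_triggered: bool = False
    response_executed: bool = False


def first_broken_stage(obs: StepObservation) -> Optional[str]:
    """Return the first stage of the chain that did not happen, or None if all did."""
    for stage in CHAIN:
        if not getattr(obs, stage):
            return stage
    return None


# Illustrative observations for two campaign steps (made-up data).
observations = [
    StepObservation("T1003.001", True, True, True, True),
    StepObservation("T1021.002", True, True, False, False),
]

for obs in observations:
    print(obs.technique_id, "->", first_broken_stage(obs) or "full chain validated")
```

The second observation is the case a technology-only test would score as a pass: the alert exists, but nothing downstream of it happened.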

This is what Gartner positions as the validation phase (Phase 4) of Continuous Threat Exposure Management (CTEM) — the mechanism that turns exposure management from a framework on a slide into an active security program. AEV provides the continuous, repeatable testing that generates the evidence organizations need to prove their security investments are producing results.

What AEV Measures That Nothing Else Does

AEV platforms track detection coverage, mean time to detect (MTTD), mean time to respond (MTTR), regression rate, and false negative rate — giving leadership proof that the program is improving, not just running.

MTTR isn't just a tool metric. It tells you whether your analysts caught the alert, how fast they responded, and whether your team has the training and capacity to act. A detection that fires but sits unresolved for 72 hours surfaces something no control report will: a resourcing or readiness gap that better tooling alone won't fix.
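As a rough illustration of how these metrics could be derived, the sketch below computes them from timestamped campaign actions. The record layout is hypothetical, and the definitions are assumptions chosen for the example: MTTD is measured from execution to detection, MTTR from detection to response, and regression rate as the share of previously detected techniques that are now missed. A real platform may define them differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class EmulatedAction:
    technique_id: str
    executed_at: datetime
    detected_at: Optional[datetime] = None   # None means no control detected it
    responded_at: Optional[datetime] = None  # None means nobody acted on the alert


def mean_delta(deltas):
    """Average a list of timedeltas; None when there is nothing to average."""
    return sum(deltas, timedelta()) / len(deltas) if deltas else None


def summarize(actions):
    detected = [a for a in actions if a.detected_at is not None]
    responded = [a for a in detected if a.responded_at is not None]
    total = len(actions)
    return {
        "detection_coverage": len(detected) / total if total else 0.0,
        "false_negative_rate": (total - len(detected)) / total if total else 0.0,
        "mttd": mean_delta([a.detected_at - a.executed_at for a in detected]),
        "mttr": mean_delta([a.responded_at - a.detected_at for a in responded]),
        # Alerts that fired but were never acted on: the readiness gap.
        "unanswered_detections": [
            a.technique_id for a in detected if a.responded_at is None
        ],
    }


def regression_rate(previous, current):
    """Share of techniques detected in the previous run that the current run missed."""
    previously_detected = {t for t, ok in previous.items() if ok}
    if not previously_detected:
        return 0.0
    regressed = {t for t in previously_detected if not current.get(t, False)}
    return len(regressed) / len(previously_detected)


# Illustrative data only.
t0 = datetime(2026, 1, 1, 12, 0, 0)
actions = [
    EmulatedAction("T1059.001", t0, t0 + timedelta(minutes=2), t0 + timedelta(minutes=12)),
    EmulatedAction("T1003.001", t0, t0 + timedelta(minutes=4)),  # detected, no response
    EmulatedAction("T1021.002", t0),                             # never detected
]

summary = summarize(actions)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Keeping unanswered detections as their own list, rather than folding them into an average, keeps the "alert fired but nobody acted" case visible instead of hiding it inside MTTR.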

The Kill Chain Gap: Where Your Testing Effort Concentrates vs. Where Your Investments Live

This is the fundamental insight that drives the evolution from pentesting to AEV — and it's worth stating bluntly.

Traditional pen testing concentrates effort at Recon and Initial Access — the left side of the kill chain. The tester's job is to get in. But your security investments live on the right side: the SIEM rules that should fire during credential access, the EDR policies that should catch privilege escalation, the detection playbooks for lateral movement, the SOC workflows that should trigger containment before exfiltration. You're spending millions on the right side of the kill chain and testing the left side once a year.

| Kill Chain Phase | Pen Test | BAS | AEV | What Gets Validated |
| --- | --- | --- | --- | --- |
| Reconnaissance | ● | ○ | ● | External exposure, information leakage |
| Initial Access | ● | ◑ | ● | Phishing, exploitation, valid credentials |
| Execution | ◑ | ● | ● | EDR detection of process execution, script interpreters |
| Persistence | ◑ | ● | ● | Registry, scheduled tasks, service installation detection |
| Privilege Escalation | ◑ | ● | ● | Token manipulation, UAC bypass, exploit detection |
| Lateral Movement | ○ | ◑ | ● | RDP, SMB, pass-the-hash, WinRM detection + SOC response |
| Credential Access | ◑ | ● | ● | LSASS dumping, Kerberoasting, credential harvesting alerts |
| Exfiltration | ○ | ◑ | ● | Data staging, C2 comms, DLP, NDR, and SOC containment |

● Primary focus · ◑ Partial coverage · ○ Not typically tested

Complementary, Not Competing

The most mature security programs don't choose between pen testing, BAS, and AEV. They use each for what it does best in a layered validation program:

Annual or Semi-Annual

Penetration Testing

Compliance obligations, pre-launch assessments, and deep application security reviews where human creativity and judgment are irreplaceable. Finds novel attack paths and satisfies auditors. Deep findings feed the AEV re-test queue.

Ongoing — Technique Library

BAS

Baseline coverage measurement across a defined technique library. Tells you which techniques your controls detect in isolation — a useful starting point for understanding ATT&CK coverage density and identifying obvious gaps.

Continuous — Change-Triggered

AEV

Continuous validation running after every change, every update, every new system in scope. Ensures pentest findings are re-tested automatically and generates ongoing evidence of effectiveness. Validates detection AND response — not just whether an alert fired.
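One way to picture change-triggered validation is a re-test queue that maps each kind of change to the campaigns exercising the affected controls. The sketch below is hypothetical throughout: the change types, campaign names, and the `RetestQueue` class are invented for illustration and do not describe any vendor's scheduler.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class RetestQueue:
    # Maps a change type to the campaigns that exercise the affected controls.
    campaigns_by_change: dict
    pending: deque = field(default_factory=deque)

    def _enqueue(self, campaign):
        """Add a campaign unless it is already waiting; report whether it was added."""
        if campaign in self.pending:
            return False
        self.pending.append(campaign)
        return True

    def add_finding(self, campaign):
        """Queue a replay of a pentest finding so it is re-tested, not just reported."""
        return self._enqueue(campaign)

    def on_change(self, change_type):
        """Queue every campaign mapped to this change type; return the newly queued ones."""
        mapped = self.campaigns_by_change.get(change_type, [])
        return [c for c in mapped if self._enqueue(c)]

    def next_campaign(self):
        """Pop the next campaign to run, or None when the queue is empty."""
        return self.pending.popleft() if self.pending else None


# Hypothetical mapping and events.
queue = RetestQueue(
    campaigns_by_change={
        "edr_policy_update": ["credential-access-chain", "lateral-movement-chain"],
        "siem_rule_change": ["credential-access-chain"],
    }
)

queue.add_finding("pentest-finding-replay")
first = queue.on_change("edr_policy_update")
second = queue.on_change("siem_rule_change")  # already pending, so nothing new

print("queued after EDR change:", first)
print("queued after SIEM change:", second)
print("pending:", list(queue.pending))
```

Deduplicating on enqueue is a design choice for the sketch: several changes landing close together trigger one run of a campaign rather than several.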

Why the Evolution Accelerated: AI and CTEM

Two forces have compressed the timeline from "nice to have" to "operational necessity" for organizations still relying on annual pentesting alone:

AI-accelerated threats. Adversaries are using AI to generate polymorphic payloads, automate reconnaissance, craft hyper-personalized social engineering, and adapt attack chains based on the defenses they encounter. Some new CVEs are weaponized within hours or days of disclosure. The threat landscape shifts weekly, not annually, so testing cadence must match threat cadence. Organizations that test once a year are flying blind for the other 364 days.

CTEM operationalization. Gartner's Continuous Threat Exposure Management framework has moved from trend to operational reality. Phase 4 — Validation — explicitly requires that organizations test whether their controls work against real-world threats, not just confirm that rules exist. AEV is the mechanism that operationalizes CTEM's validation phase, providing the continuous, repeatable testing that turns the framework from a strategic diagram into an active security program with measurable outcomes.

The Full Comparison

| Dimension | Pen Testing | BAS | AEV |
| --- | --- | --- | --- |
| Approach | Manual, human-driven | Automated, script-based | Automated + human, campaign-based |
| Cadence | Annual / semi-annual | Continuous (techniques) | Continuous + change-triggered |
| Attack realism | High: human creativity | Low: isolated atomic tests | High: multi-stage campaigns |
| Kill chain coverage | Left side (recon, access) | Middle (individual techniques) | Full chain, access to exfiltration |
| What it measures | Exploitability | Control detection (tech only) | Detection + response (people, process, tech) |
| MTTD / MTTR tracking | No | No | Yes |
| Improvement tracking | Year-over-year only | Coverage density over time | Coverage + MTTD + MTTR + regression |
| Team collaboration | External, siloed | Automated, no team interaction | Red + blue + purple team workflows |
| Compliance value | Required by most frameworks | Supports control evidence | Satisfies + exceeds pentest requirements |
| CTEM alignment | Point-in-time input | Partial: technique testing | Full Phase 4 operationalization |

Where Does Your Program Sit?

If you're still relying solely on annual pentests, you're answering today's question with a 2005 methodology. If you've adopted BAS, you've automated the measurement, but you're measuring technique coverage, not organizational readiness. If you're ready to measure whether your defenses actually work against real adversary campaigns (people, process, and technology together, continuously), then AEV is where the evolution has been heading all along.

The question was never "which approach is best." The question is: where is your program on this maturity curve, and what does the next step look like?

See the Full Comparison

AEV vs. BAS vs. Penetration Testing — In Depth

For the interactive comparison with detailed breakdowns, visit our AEV vs. BAS vs. Penetration Testing page. To see how AEV fits into your offensive maturity roadmap, download the Offensive Cybersecurity Maturity eBook.
